AI is becoming smarter every day, but the fundamental question remains: Who does this intelligence serve, and what real change does it create?

NAVER Cloud approaches this question head-on. Our goal isn’t simply to build increasingly powerful models—we start by understanding the daily lives and contexts of real people, then layer technology on top of that foundation. At our research labs, we go beyond demos. We design models alongside field users and test them in real-world environments, using data to confirm what truly helps and to identify the problems that need solving.

Our mission is straightforward: create technology that isn’t just more powerful, but more beneficial—technology that opens opportunities to more people. Inclusive AI is our methodology for delivering on that promise, transforming it from experiment into lived experience.

What is inclusive AI?

Inclusive AI means technology that reaches previously underserved populations: older adults, children, people with disabilities, patients, and anyone else overlooked because the market saw little commercial appeal in serving them. It means supporting diverse modes of expression: voice and text, sound and gesture, screen-based and beyond. We want to embrace every form of human interaction.

Human-computer interaction (HCI) is the field that researches and designs how people and technology meet. We ask: “Who can access this technology comfortably, and in what contexts?” Then we verify those answers in real-world settings. In essence, HCI transforms technology from “possible” to “helpful,” and inclusive AI scales that helpfulness to reach everyone’s daily life.

Case 1. AACessTalk: “The moment conversation started”

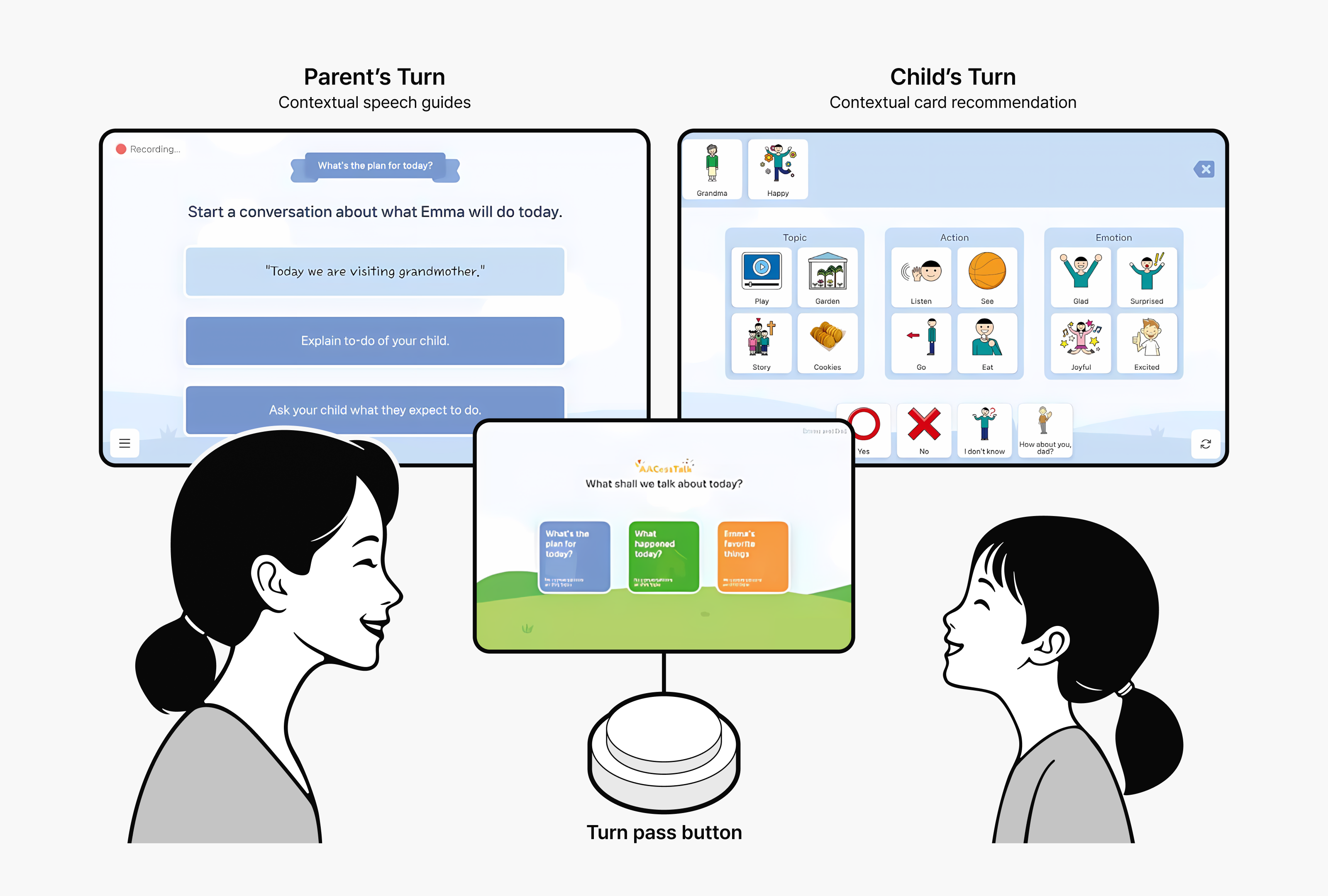

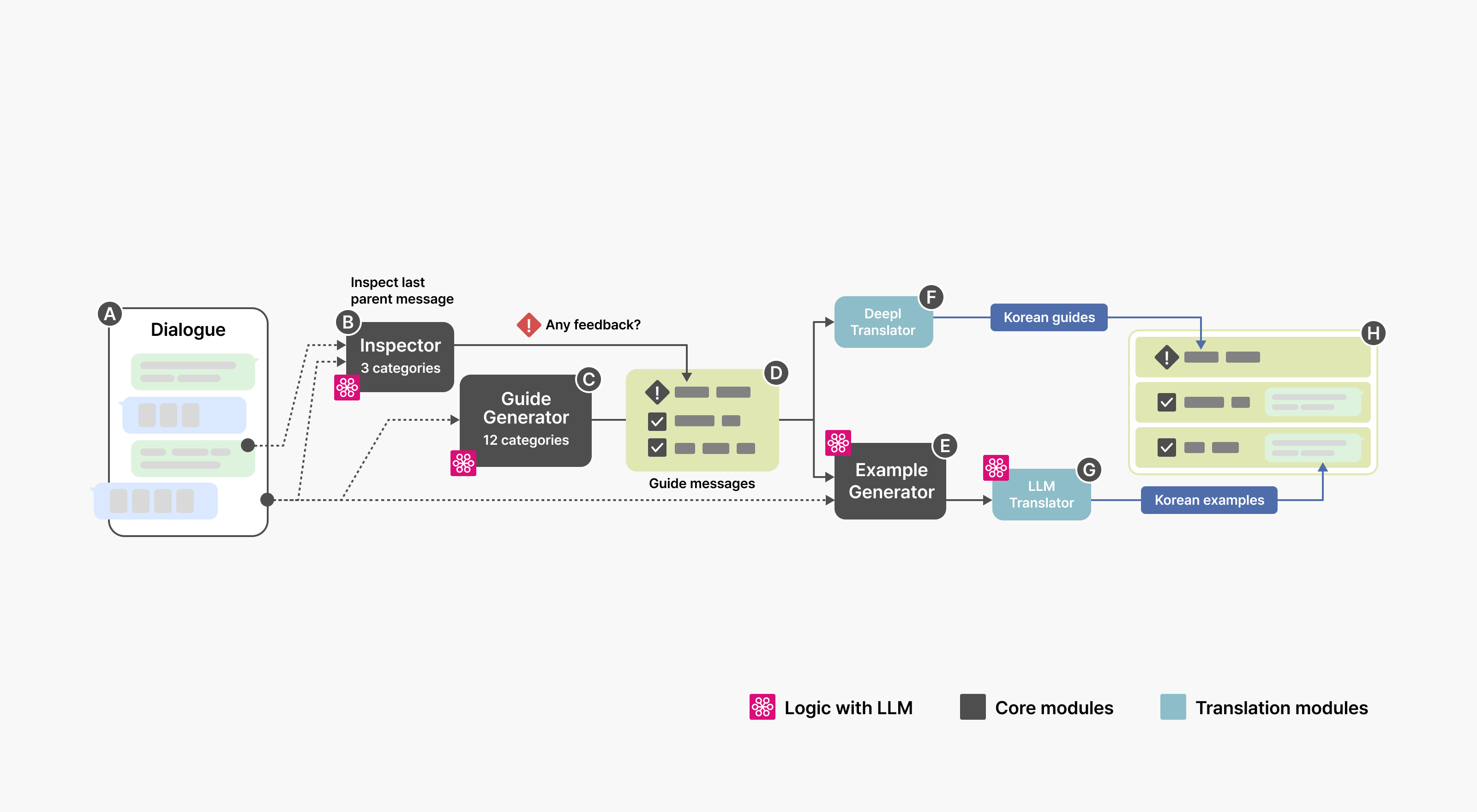

For nonverbal or minimally verbal children with autism and their parents, conversation requires more than good intentions. Traditional augmentative and alternative communication (AAC) tools rely on pre-selected word cards, which help communicate basic needs but struggle to support the natural, spontaneous flow of everyday conversation.

AACessTalk addresses this limitation directly. By analyzing conversational context in real time, it suggests the word cards a child needs “right now” while offering context-specific conversational guidance to parents. By connecting “what to say” with “how to lead” on a single screen, it moves beyond simple request-and-response exchanges to help build genuine conversational flow.

[Figure 1: Generative pipeline for parental guidelines. The pipeline analyzes the current dialogue (A) and generates parental guidelines with example messages (H)]

We saw meaningful change in the field. Over two weeks, 11 families using AACessTalk recorded 232 conversations, during which children independently selected 2,244 word cards. Many parents reported that “it felt like having a real conversation with my child for the first time.” One parent shared: “When I saw my child express themselves with an unexpected word, I realized the language capabilities that had been there all along.”

The research earned recognition too—AACessTalk received the Best Paper Award at ACM CHI 2025, the world’s premier HCI conference, an honor given to only the top 1% of submissions. But the true value wasn’t the award—it was the change we witnessed in these families. We demonstrated through data how technology can transcend efficiency to unlock deeper understanding and connection.

Reference paper:

“AACessTalk: Fostering Communication between Minimally Verbal Autistic Children and Parents with Contextual Guidance and Card Recommendation,” by Dasom Choi, SoHyun Park, Kyungah Lee, Hwajung Hong, and Young-Ho Kim.

ACM CHI 2025 (Best Paper Award)

Case 2. ChaCha: Talking through emotions with a chatbot

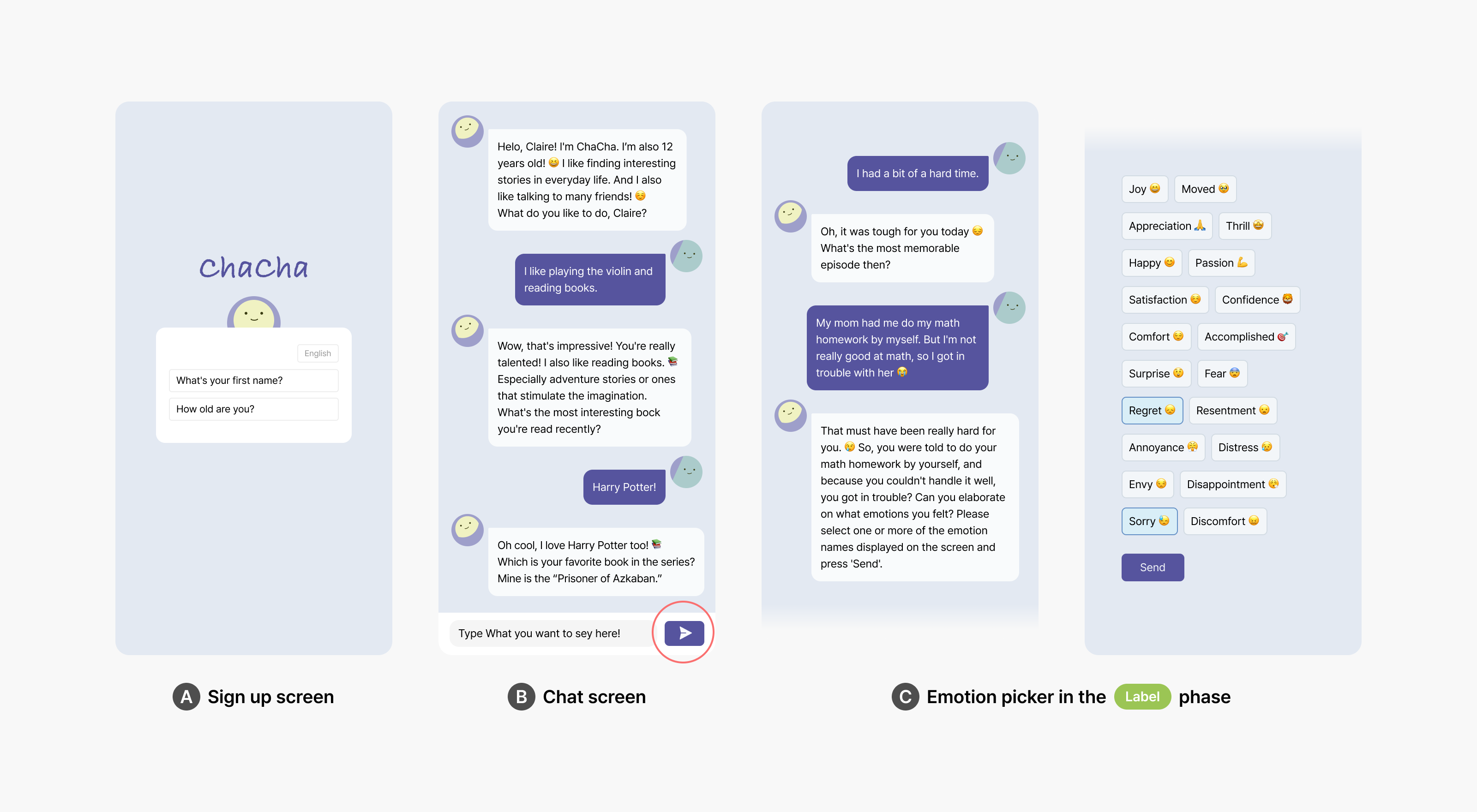

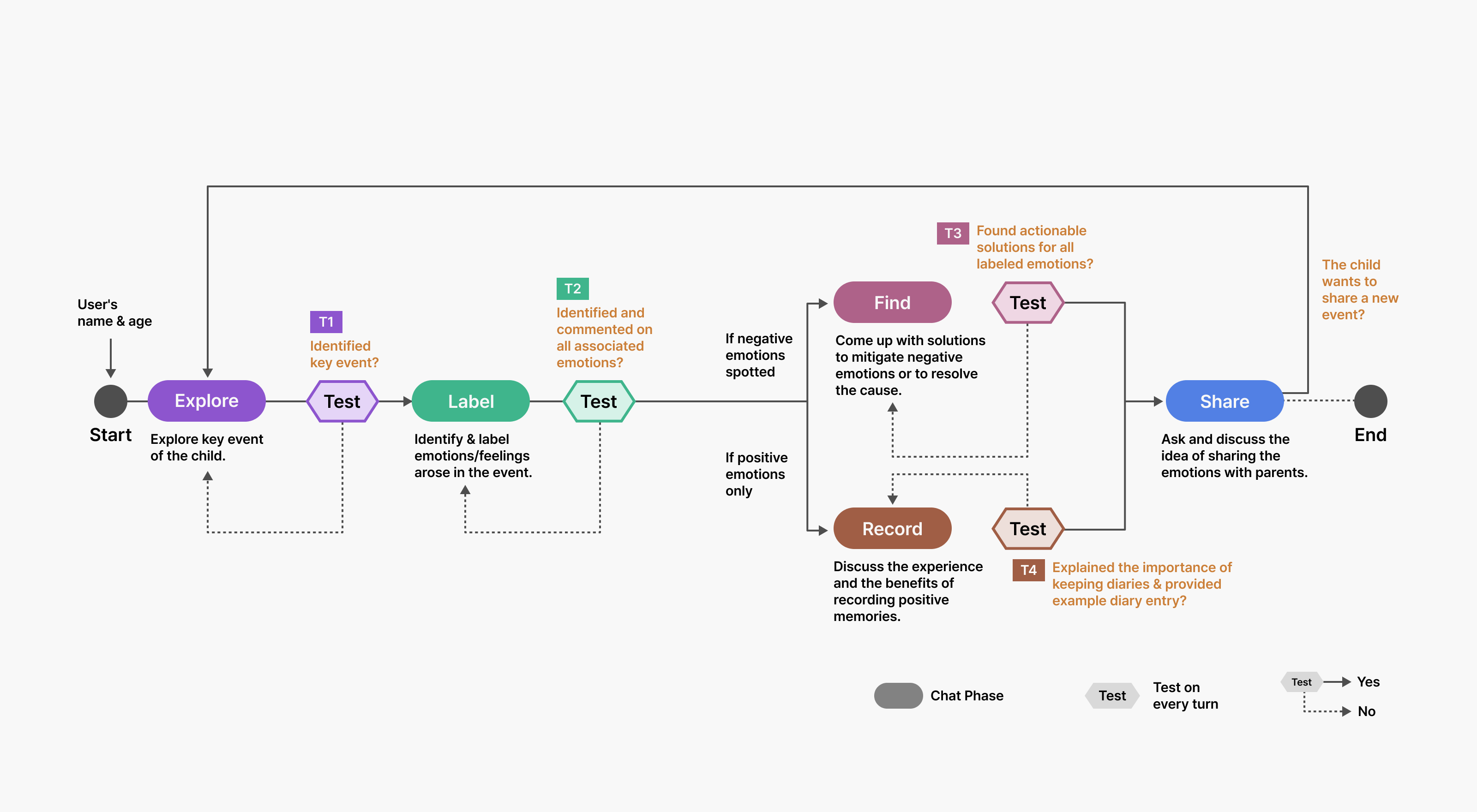

Inclusive AI and HCI can also help children practice talking about their emotions. Emotional intelligence takes practice, specifically in identifying feelings and putting them into words. When children are upset at school, frustrated after fighting with a sibling, or disappointed when expectations aren’t met, they need opportunities to name and articulate these emotions. But creating these practice moments isn’t easy for parents or children.

Our HCI team developed ChaCha, an LLM chatbot that holds free-form conversations to help children practice expressing emotions. When a child shares something like “A friend pushed me during P.E.,” ChaCha helps them label the emotion: “Were you surprised, or did it feel unfair?” The chatbot then suggests concrete coping strategies, such as taking a breath or reframing the situation, and guides the child toward constructive action: “What could you say to your friend tomorrow?”

User testing revealed clear benefits. While adhering to pre-designed safety guidelines, ChaCha flexibly responded to what children said, creating conversations that felt natural. Many children described ChaCha as “a friend my age.” What they hesitated to tell their parents, they shared more freely with the chatbot, building small successful experiences in expressing, understanding, and managing their emotions through repeated practice.

[Figure 2: The overview of conversational phases of the ChaCha dialogue system and transition rules among them. Each time the user enters a message, the system inspects the entire dialogue history by performing a test corresponding to the current phase to decide whether to proceed to another phase or stay]
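
The phase-and-transition idea in Figure 2 can be illustrated with a small state machine. This is only a minimal sketch under stated assumptions, not ChaCha's implementation: the phase names, the word lists, and the keyword-based tests are hypothetical stand-ins (a real system would more plausibly ask an LLM to run each test). What it shows is the control flow the caption describes: on every user message, the test for the current phase runs over the whole dialogue history and decides whether to move on or stay.

```python
from enum import Enum, auto
from typing import Callable, Dict, List, Tuple


class Phase(Enum):
    # Hypothetical phase names chosen for this sketch.
    EXPLORE = auto()   # the child describes what happened
    LABEL = auto()     # the child names the feeling
    STRATEGY = auto()  # the chatbot suggests a coping strategy
    ACTION = auto()    # the child plans what to do next


# Hypothetical word lists; keyword matching stands in for a smarter test.
EMOTION_WORDS = {"sad", "angry", "surprised", "unfair", "scared", "upset"}
AGREEMENT_WORDS = {"ok", "okay", "yes", "sure"}


def _words(message: str) -> List[str]:
    """Lowercase the message and strip basic punctuation from each word."""
    return [w.strip(".,!?").lower() for w in message.split()]


def described_event(history: List[str]) -> bool:
    # Passes once any user message is at least four words long.
    return any(len(_words(m)) >= 4 for m in history)


def named_emotion(history: List[str]) -> bool:
    # Passes once any user message contains an emotion word.
    return any(w in EMOTION_WORDS for m in history for w in _words(m))


def accepted_strategy(history: List[str]) -> bool:
    # Passes when the latest user message contains an agreement word.
    return bool(history) and any(w in AGREEMENT_WORDS for w in _words(history[-1]))


PhaseTest = Callable[[List[str]], bool]

# One (test, next phase) rule per phase; ACTION has no rule, so it is final.
TRANSITIONS: Dict[Phase, Tuple[PhaseTest, Phase]] = {
    Phase.EXPLORE: (described_event, Phase.LABEL),
    Phase.LABEL: (named_emotion, Phase.STRATEGY),
    Phase.STRATEGY: (accepted_strategy, Phase.ACTION),
}


def next_phase(current: Phase, history: List[str]) -> Phase:
    """Run the current phase's test on the user's messages so far."""
    rule = TRANSITIONS.get(current)
    if rule is None:
        return current
    test, target = rule
    return target if test(history) else current
```

Keeping the rules in a table makes each phase's test easy to swap out, for example replacing a keyword check with a model call, without touching the transition logic.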

Reference paper:

“ChaCha: Leveraging Large Language Models to Prompt Children to Share Their Emotions about Personal Events,” by Woosuk Seo, Chanmo Yang, and Young-Ho Kim.

ACM CHI 2024

Case 3. MindfulDiary: Bridging the gap between medical appointments

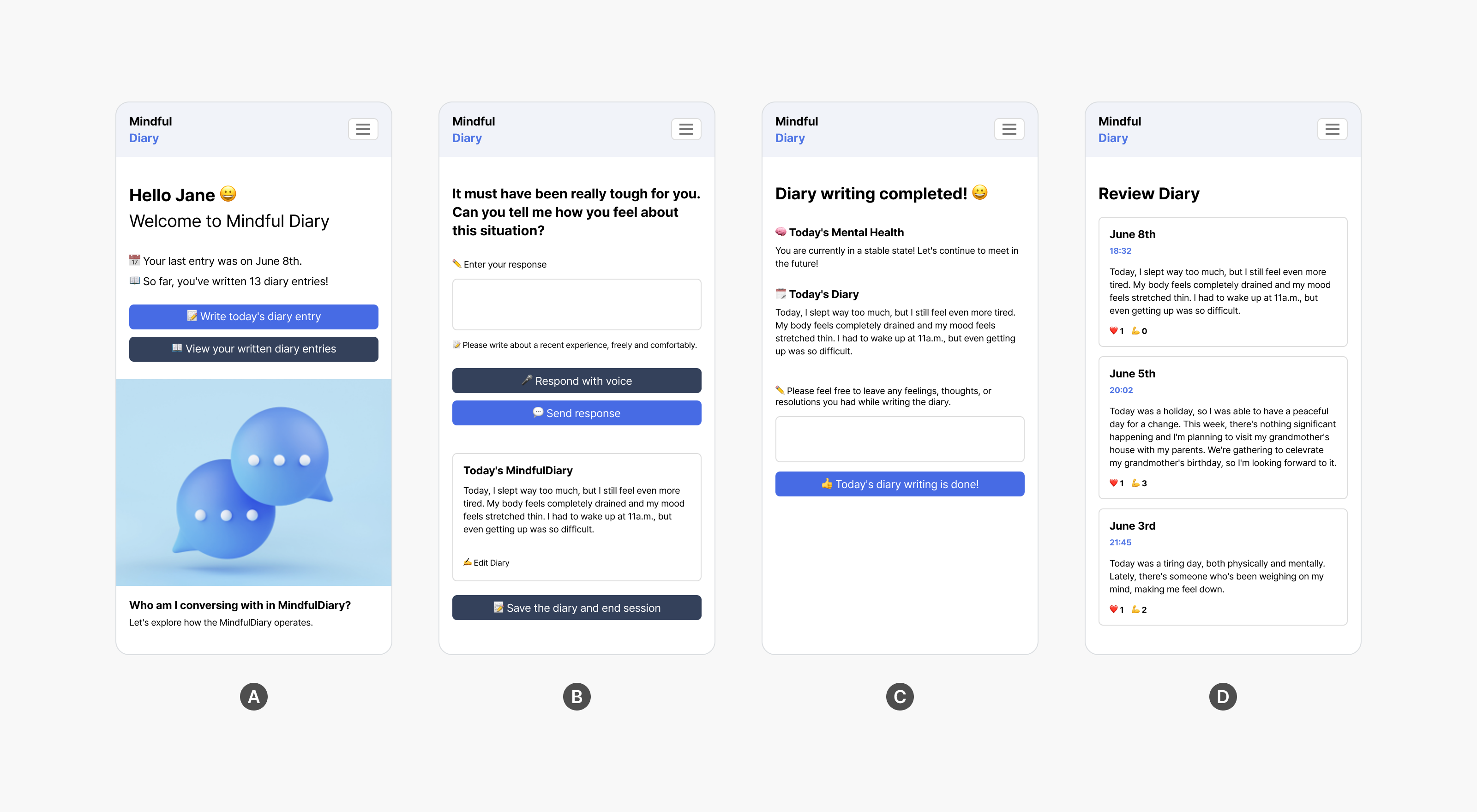

Inclusive AI and HCI also work quietly in less obvious spaces—like filling the gaps between medical appointments. Psychiatric treatment typically involves appointments spaced weeks apart. When patients rely on memory alone to recount what happened during that interval, critical context often gets lost. While keeping a daily journal could help patients share their experiences outside the hospital, the effort of writing can feel overwhelming.

[Figure 3: Main screens of the MindfulDiary app. (a) The main screen, (b) the journaling screen, (c) the summary screen shown when the user submits the journal dialogue, and (d) the review screen displaying the user’s past journals]

MindfulDiary addresses this challenge by allowing patients to talk briefly with an AI in casual, everyday conversation. The system automatically structures key information—mood changes, sleep patterns, interpersonal stress—reducing the recording burden while generating concise summary reports for medical staff. This enables more accurate and substantive conversations during the next appointment.
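
To make "structuring key information" more concrete, here is a minimal sketch of what a structured journal record and a between-appointment summary might look like. Everything in it is an assumption made for illustration: the `JournalRecord` fields, the 1-to-5 mood scale, and the `summarize` function are hypothetical and do not describe MindfulDiary's actual data model. The sketch also starts from already-structured records; extracting them from free conversation is the part an LLM would handle.

```python
from dataclasses import dataclass, field
from datetime import date
from statistics import mean
from typing import Dict, List


@dataclass
class JournalRecord:
    """One day's structured record (hypothetical schema)."""
    day: date
    mood: int                      # assumed scale: 1 (low) to 5 (high)
    sleep_hours: float
    interpersonal_stress: List[str] = field(default_factory=list)


def summarize(records: List[JournalRecord]) -> Dict[str, object]:
    """Condense the records between two appointments into a short report."""
    if not records:
        return {"days": 0}
    ordered = sorted(records, key=lambda r: r.day)
    return {
        "days": len(ordered),
        # Difference between the last and first recorded mood.
        "mood_change": ordered[-1].mood - ordered[0].mood,
        "avg_sleep_hours": round(mean(r.sleep_hours for r in ordered), 1),
        # Stress notes in chronological order.
        "stress_notes": [s for r in ordered for s in r.interpersonal_stress],
    }
```

A summary like this is only a starting point for the appointment; the journal entries themselves remain the richer source for clinicians.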

In hospital pilot testing, teenage patients engaged consistently with the app, and the accumulated data helped clinicians identify critical patterns, including early warning signs of suicide risk. Medical staff reported that reviewing these everyday AI conversations revealed moments of joy and candid thoughts that patients wouldn’t typically share in clinical settings. This deeper understanding fostered greater empathy and ultimately led to more effective treatment. In essence, MindfulDiary transformed the disconnect of “treatment-gap-treatment” into a continuous, connected narrative.

Reference paper:

“MindfulDiary: Harnessing Large Language Model to Support Psychiatric Patients’ Journaling,” by Taewan Kim, Seolyeong Bae, Hyun Ah Kim, Su-woo Lee, Hwajung Hong, Chanmo Yang, and Young-Ho Kim.

ACM CHI 2024

Case 4. CareCall: Redesigning check-ins

Regularly checking in on older adults living alone or those who need care—asking how they feel, watching for subtle signs of change—seems straightforward in theory. In practice, it’s far more complex. Repetitive questioning erodes trust, while missed warning signs render the entire effort meaningless.

CareCall redesigns the check-in experience by addressing both challenges. By remembering essential details from previous calls—medication adherence, sleep patterns, meals—it sustains natural conversation flow. Instead of asking binary questions like “Did you eat?” it poses respectful, open-ended ones: “How has your appetite been lately?” This approach surfaces changes more effectively. Safety mechanisms are built in—when warning signs emerge, the AI immediately connects the person to a human consultant.
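
The two behaviors described above, remembering earlier calls and handing off to a person when warning signs appear, can be sketched in a few lines. This is an illustrative sketch under stated assumptions rather than CareCall's design: `CallMemory`, `follow_up`, `needs_human`, the question templates other than the appetite example quoted above, and the warning phrases are all hypothetical, and plain substring matching stands in for whatever detection a production system would use.

```python
from dataclasses import dataclass, field
from typing import Dict

# Open-ended questions per topic. Only the "meals" wording comes from the
# text above; the other two are hypothetical.
OPEN_QUESTIONS: Dict[str, str] = {
    "meals": "How has your appetite been lately?",
    "sleep": "How have you been sleeping lately?",
    "medication": "How has taking your medication been going?",
}

# Hypothetical warning phrases; substring matching is a stand-in only.
WARNING_PHRASES = ("chest pain", "fell", "dizzy")


@dataclass
class CallMemory:
    """What the person shared on earlier calls, keyed by topic."""
    notes: Dict[str, str] = field(default_factory=dict)

    def remember(self, topic: str, detail: str) -> None:
        self.notes[topic] = detail


def follow_up(memory: CallMemory, topic: str) -> str:
    """Refer back to a remembered detail, or fall back to an open question."""
    detail = memory.notes.get(topic)
    if detail is not None:
        return f"Last time you mentioned {detail}. How has that been since then?"
    return OPEN_QUESTIONS[topic]


def needs_human(utterance: str) -> bool:
    """Flag an utterance that should be handed off to a human consultant."""
    text = utterance.lower()
    return any(phrase in text for phrase in WARNING_PHRASES)
```

For example, after `memory.remember("sleep", "trouble falling asleep")`, the sleep question refers back to that detail instead of starting from scratch, while a topic with no history falls back to the open-ended wording.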

CareCall isn’t “an AI that provides correct answers”—it’s an AI that nurtures relationships. It remembers what people share, asks questions with care, and knows when human intervention is needed. When these elements combine, a single phone call becomes care infrastructure.

Related posts:

- CLOVA CareCall research paper 1: Benefits and challenges of deploying LLM chatbots for public health intervention (CHI 2023)

- CLOVA CareCall research paper 2: Chatbots’ long-term memory and self-disclosure (CHI 2024)

Our path forward

At NAVER, we approach AI through an HCI lens: the goal is not simply to build “smarter models” but to create tangible change in real-world environments. We’re committed to expanding the reach of inclusive AI, making technology accessible to more users. This means designing AI in the field, testing rigorously, identifying genuine problems, and solving them.

NAVER Cloud is putting inclusive AI into practice—lowering barriers for those who previously faced difficulty accessing technology, making tomorrow meaningfully better than today. To make these experiences standard rather than exceptional, we’ll continue turning good intentions into measurable impact.

Learn more in KBS N Series, AI Topia, episode 3

You can see all of this in action in the third episode of KBS N Series’ AI Topia, “Inclusive AI for underserved populations: HCI research and CareCall.” NAVER Cloud research scientist Young-Ho Kim, leader of the HCI Research Group at NAVER AI Lab, breaks down these ideas with clear examples and helpful context. It’s a great way to get a fuller picture of what we’ve covered here!